hjvt::blog::PCMG #1

PC Music Generator

How it started

PCMG started in 2020 as a primitive little tool that just wrote raw PCM samples to a file. The file name was even hardcoded! That's where it originally got its name: PCM Generator. Later on I added live playback, then primitive MIDI support, and then things accelerated, and now it's a real synthesizer with a GUI and all. So PCMG had to get a new expansion: it now stands for PC Music Generator.

Initial architecture

When I started making a real synth out of it, threads seemed like an obvious choice: audio generation is latency-sensitive, so running it on a separate thread seemed like a no-brainer. The initial architecture therefore looked like this:

                     main thread (ui)
                            |
                            |
    midi callback ---- audio thread ---- cpal callback

Then I started working on a WASM build and things got a little out of hand. At first I was set on preserving this architecture and went off to research web workers, which seemed like a way to do threads in the browser. My first attempts failed miserably:

- trunk couldn't even serve workers due to the lack of SSL and of a way to set the necessary headers, namely Cross-Origin-Opener-Policy and Cross-Origin-Embedder-Policy.

- Then it was silently failing to run the workload. This was on me: I didn't realize at first that the worker had to be spawned with the same WASM module, because of relocations.

- After I got it to run at all, I started banging my head against the next issue: cpal could not even enumerate devices when working off the main thread. I had to apply custom patches to it to get it to work with the +mutable-globals,+atomics target features.

So, in the end, I realized I had to change my approach.

New architecture

Since both cpal and midir are callback-based, it is actually possible to run them concurrently without threads at all, so I moved my audio thread code directly into the cpal callback and used a single-threaded non-blocking queue based on epaint::mutex::Mutex (yes, despite being called that, it is non-blocking on the wasm32 target).

I connected my egui app and both of the callbacks with a couple of STQueue structures:

- One for UI messages

- One for MIDI messages

- And a similar structure for passing the few latest samples back to the UI for waveshape visualisation

This actually works surprisingly well, although the sound sometimes gets choppy while you are actively interacting with the UI.
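
The actual STQueue definition is not reproduced here, so the following is only a rough sketch of the idea under my own assumptions: a VecDeque behind a lock that is only ever try-locked, so neither side can block. std::sync::Mutex stands in for epaint::mutex::Mutex to keep the example dependency-free, and the method names try_push and try_pop are hypothetical.

```rust
use std::collections::VecDeque;
use std::sync::{Arc, Mutex};

/// Hypothetical stand-in for the post's STQueue: a shared FIFO that never blocks.
/// Assumption: std::sync::Mutex replaces epaint::mutex::Mutex for this sketch.
pub struct STQueue<T> {
    inner: Arc<Mutex<VecDeque<T>>>,
}

impl<T> Clone for STQueue<T> {
    /// Cloning yields another handle to the same underlying queue.
    fn clone(&self) -> Self {
        Self { inner: Arc::clone(&self.inner) }
    }
}

impl<T> STQueue<T> {
    pub fn new() -> Self {
        Self { inner: Arc::new(Mutex::new(VecDeque::new())) }
    }

    /// Push without waiting. If the lock is unavailable (held or poisoned),
    /// the value is handed back so the caller can retry or drop it.
    pub fn try_push(&self, value: T) -> Result<(), T> {
        match self.inner.try_lock() {
            Ok(mut queue) => {
                queue.push_back(value);
                Ok(())
            }
            Err(_) => Err(value),
        }
    }

    /// Pop without waiting. Returns None when the queue is empty
    /// or the lock is unavailable.
    pub fn try_pop(&self) -> Option<T> {
        let mut queue = self.inner.try_lock().ok()?;
        queue.pop_front()
    }
}
```

In a setup like the one described, the UI would hold one handle and push messages into it, while the cpal callback would hold a clone and drain it with try_pop at the start of every buffer.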

UI rewrite

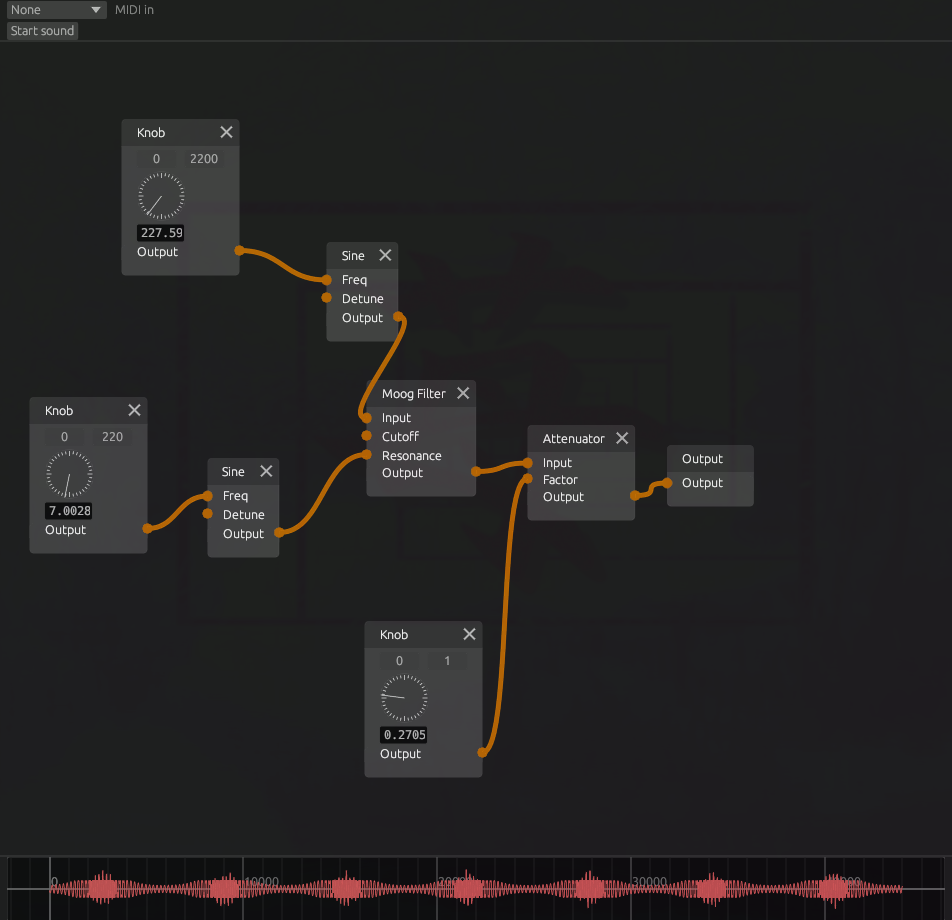

By this point I had a graph UI based on egui_node_graph. It was working fine, but had a few quirks.

- Firstly, I saw no way to both have knobs and connections leading to the same inputs of each audio device

- Secondly, each device node had to be defined in code

- Lastly, and probably most importantly, it didn't look and feel like a synth. It was cool and functional, but didn't give me that analogue feeling, y'know.

And so, I started blasting out code. At the point of writing, the ui-rewrite branch has 101 commits and is 56 commits ahead of master.

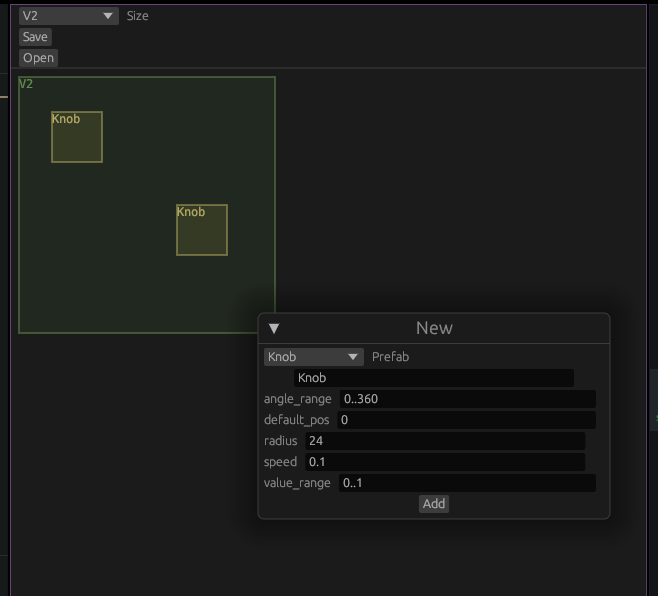

My first aim was to get a way to define all the knobs and synth modules outside code, so I whipped up god-awful widget and module editors.

Then I started to work on UI layout for the synth.

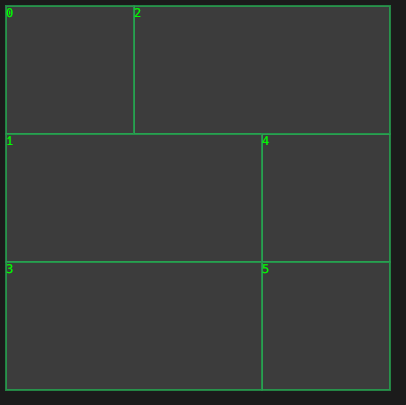

I wanted to have modules of a few standard sizes that could fit together on a grid.

My first and, so far, only idea on how to do that was to reach for a quad tree data structure. I put my modules in areas inside a qtree, trying to keep them in a tight square anchored at the top left, and it gave me pretty good results.

As an aside, I was annoyed enough with the library I found for working with quad trees that I started contributing to it.

Lookin' good, agent York!

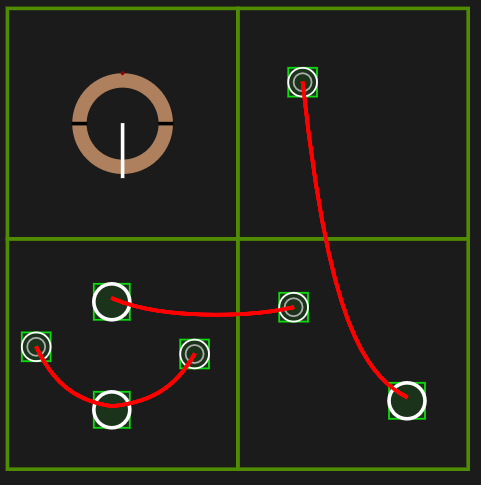

My next aim was making connections look good. So I googled, and there's this thing called a catenary curve, which describes the shape formed by a chain left to hang between two points. So I went ahead and implemented the formula for it to draw my wires. The first results were a bit wonky, and, embarrassingly, it took me about 4 hours to properly translate the math into Rust code.

The result was definitely worth it though!
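
For the curious, here is one common way to fit a catenary y = a * cosh((x - x0) / a) + y0 through two points for a given wire length. This is a sketch under my own assumptions, not necessarily the formulation used in PCMG: the Catenary type and its methods are hypothetical, the y axis points up (flip the sign for screen coordinates), and the parameter a is found by plain bisection on sinh(b) / b with b = h / (2a).

```rust
/// Hypothetical helper: parameters of y = a * cosh((x - x0) / a) + y0.
#[derive(Debug, Clone, Copy)]
pub struct Catenary {
    pub a: f64,
    pub x0: f64,
    pub y0: f64,
}

impl Catenary {
    /// Fit a catenary of the given arc length through p1 and p2 (y axis up).
    /// Returns None if p2 is not to the right of p1 or the wire is too short.
    pub fn through(p1: (f64, f64), p2: (f64, f64), length: f64) -> Option<Self> {
        let h = p2.0 - p1.0; // horizontal span
        let v = p2.1 - p1.1; // vertical offset
        if h <= 0.0 || length <= (h * h + v * v).sqrt() {
            return None;
        }
        // Solve sinh(b) / b = sqrt(L^2 - v^2) / h for b = h / (2a).
        let target = (length * length - v * v).sqrt() / h;
        let f = |b: f64| b.sinh() / b;
        let mut lo = 1e-9;
        let mut hi = 1.0;
        // f is increasing for b > 0, so grow the bracket until it contains the root.
        while f(hi) < target {
            hi *= 2.0;
        }
        for _ in 0..100 {
            let mid = 0.5 * (lo + hi);
            if f(mid) < target {
                lo = mid;
            } else {
                hi = mid;
            }
        }
        let b = 0.5 * (lo + hi);
        let a = h / (2.0 * b);
        // Horizontal position of the lowest point, then the vertical shift
        // that makes the curve pass through p1.
        let x0 = 0.5 * (p1.0 + p2.0) - a * (v / length).atanh();
        let y0 = p1.1 - a * ((p1.0 - x0) / a).cosh();
        Some(Self { a, x0, y0 })
    }

    /// Height of the wire at horizontal position x.
    pub fn y(&self, x: f64) -> f64 {
        self.a * ((x - self.x0) / self.a).cosh() + self.y0
    }
}
```

To draw a wire, one would sample y at a handful of x positions between the two plugs and connect the points with line segments.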

After this I set my sights on implementing the backend device graph.

The control graph

As it is now, the graph contains the following entities:

- Devices, those are actually nodes of the graph

- Modules, the visual grouping of knobs, CV plugs and other controls

- Actual graph: mappings between node edges and device parameters

This graph is then compiled into a simpler directed graph focusing on the output node. That graph is then compiled into a linear bytecode that tells the audio pipeline in which order to sample devices and what to do with them. This last thing sounds complex, but actually there are only three instructions:

- Sample. Take one of the outputs of a device and store it.

- Parametrise. Take the stored sample and feed it into an input of another device.

- Output. Take the stored sample and output it for the world to hear. This last one is actually kinda redundant: it is the same as reaching the end of the instruction buffer, and is, unless I introduce a bug, the last instruction in it.
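
To make the three instructions a bit more concrete, here is a minimal interpreter sketch. Everything in it is an assumption for illustration: the Instr enum, the Device trait, the single "stored sample" register, and the two toy devices (Constant and Gain) are hypothetical and not the real PCMG types.

```rust
/// Hypothetical encoding of the three instructions.
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum Instr {
    /// Take one output of a device and store it.
    Sample { device: usize, output: usize },
    /// Feed the stored sample into an input of a device.
    Parametrise { device: usize, input: usize },
    /// Emit the stored sample.
    Output,
}

/// Hypothetical minimal device interface.
pub trait Device {
    fn sample(&mut self, output: usize) -> f32;
    fn set_input(&mut self, input: usize, value: f32);
}

/// Toy device: always produces the same value.
pub struct Constant(pub f32);

impl Device for Constant {
    fn sample(&mut self, _output: usize) -> f32 {
        self.0
    }
    fn set_input(&mut self, _input: usize, _value: f32) {}
}

/// Toy device: multiplies its single input by a fixed factor.
pub struct Gain {
    pub input: f32,
    pub factor: f32,
}

impl Device for Gain {
    fn sample(&mut self, _output: usize) -> f32 {
        self.input * self.factor
    }
    fn set_input(&mut self, _input: usize, value: f32) {
        self.input = value;
    }
}

/// Run the program once and return one output sample.
/// Assumption: a single register holds the stored sample.
pub fn run(program: &[Instr], devices: &mut [Box<dyn Device>]) -> f32 {
    let mut stored = 0.0;
    for instr in program {
        match *instr {
            Instr::Sample { device, output } => stored = devices[device].sample(output),
            Instr::Parametrise { device, input } => devices[device].set_input(input, stored),
            Instr::Output => return stored,
        }
    }
    // Reaching the end of the buffer behaves the same as Output.
    stored
}
```

A program for "constant into gain into output" would then read: Sample device 0, Parametrise device 1, Sample device 1, Output. Dropping the trailing Output gives the same result, which matches the redundancy noted above.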

The next thing

It's the new module and widget editor. I still have no good way to create modules and widgets, "good" meaning "doesn't make your eyes and fingers hurt".

Finger hurtin' bad!

Making one is a whole bunch of UI/UX work, which I'm pretty bad at, but it is definitely coming along. So, stay tuned for updates, i.e. follow me at hjvt@hachyderm.io or star and watch the project on GitHub.